Review

Artificial intelligence-assisted tools for redefining the communication landscape of the scholarly world

Habeeb Ibrahim Abdul Razack1,2, Sam T. Mathew3, Fathinul Fikri Ahmad Saad1,4, Saleh A. Alqahtani5,6

Science Editing 2021;8(2):134-144.

DOI: https://doi.org/10.6087/kcse.244

Published online: July 27, 2021

1Faculty of Medicine and Health Sciences, Universiti Putra Malaysia, Selangor, Malaysia

2Department of Cardiac Sciences, College of Medicine, King Saud University, Riyadh, Kingdom of Saudi Arabia

3Researcher and Medical Communications Expert, Bangalore, India

4Nuclear Imaging Unit, Hospital Pengajar Universiti Putra Malaysia, Selangor, Malaysia

5Division of Gastroenterology and Hepatology, Johns Hopkins University, Baltimore, MD, USA

6Liver Transplant Center, and Department of Biostatistics, Epidemiology and Scientific Computing, King Faisal Specialist Hospital and Research Center, Riyadh, Kingdom of Saudi Arabia

- Correspondence to Sam T. Mathew sam.t.mathew@outlook.com

Copyright © 2021 Korean Council of Science Editors

This is an open access article distributed under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

Abstract

- The flood of research output and increasing demands for peer reviewers have necessitated the intervention of artificial intelligence (AI) in scholarly publishing. Although human input is seen as essential for writing publications, the contribution of AI slowly and steadily moves ahead. AI may redefine the role of science communication experts in the future and transform the scholarly publishing industry into a technology-driven one. It can prospectively improve the quality of publishable content and identify errors in published content. In this article, we review various AI and other associated tools currently in use or development for a range of publishing obligations and functions that have brought about or can soon leverage much-demanded advances in scholarly communications. Several AI-assisted tools, with diverse scope and scale, have emerged in the scholarly market. AI algorithms develop summaries of scientific publications and convert them into plain-language texts, press statements, and news stories. Retrieval of accurate and sufficient information is prominent in evidence-based science publications. Semantic tools may empower transparent and proficient data extraction tactics. From detecting simple plagiarism errors to predicting the projected citation impact of an unpublished article, AI’s role in scholarly publishing is expected to be multidimensional. AI, natural language processing, and machine learning in scholarly publishing have arrived for writers, editors, authors, and publishers. They should leverage these technologies to enable the fast and accurate dissemination of scientific information to contribute to the betterment of humankind.

- The term artificial intelligence (AI), first coined in 1956 by John McCarthy—known as the father of AI [1]—is widely described now as any thoughtful application of advanced computer sciences in executing tasks and processes that are usually related to intelligent beings [2]. Across industries, the world’s nations are in a rapid race to scale up their AI capacity. A 2019 Accenture report found that over 80% of 1,500 executives representing 12 technologically developed economies were aware of AI’s potential in attaining development objectives [3]. Three-fourths of them contemplate losing business if AI is not implemented and scaled by 2024. According to “The state of AI in 2020” report, the recent coronavirus disease 2019 (COVID-19) pandemic has not prevented high-performing organizations from investing in AI [4].

- In another study of 2,700 professionals from seven developed countries, Boston Consulting Group observed that China was the emerging leader on the path to an advantage in the AI field, with 85% of organizations either piloting or implementing AI. China excels and surpasses many developed countries in the duel of AI adoption in niche sectors, including healthcare, technology, and publishing [5].

- AI is expected to have a tremendous influence on publishing, an age-old industry. It may help develop “smart publishers” by aiding humans to accomplish complex editorial tasks, such as analyzing large quantities of data, making predictions and forecasts, suggesting decisions based on real-time information, and continuously amplifying performance [6]. With the emergence of new service providers and the unveiling of unique technical add-ons to existing platforms, AI is becoming a noticeable sensation also in scholarly and academic publishing [7]. The first-ever pilot prototype publication of a machine-generated science book became a reality in 2019 [8].

- In the scholarly publishing space, AI-based algorithms have enabled the innovative exploration of scientific content and may help redefine the role of science communication experts in the years to come. The editor-in-chief of a medical journal with more than 100 volumes published to date intriguingly proposed that “writing machines” will draft scientific manuscripts in the near future, while “reviewing machines” will appraise them [9]. Reducing human errors and meeting stringent timelines are vital targets for the success of scholarly publication projects. AI tools can help overcome obstacles that publication professionals currently encounter. AI has the potential to address these challenges by considerably decreasing the time and effort spent on simple, monotonous, low-impact routine tasks, freeing time to think, explore, and work on multifaceted scholarly processes [10].

- A 2019 multinational survey of around 300 senior leaders and editors (mean age, 41 years; mean experience, 13 years) from 17 countries analyzed the challenges and benefits of using AI in media and publishing houses of general interest [11]. There were also several takeaways for the scholarly community. Some key results, such as increased readability, easy navigation, enhanced content discoverability, improved decision-making, automated complex processes, compliance with standards, and reduced human workload, are crucial for introducing aspects of AI in scholarly publishing. However, the cost factor would be the most significant hurdle for small publishers and standalone journals, although monetary benefits are apparent even for minimal AI investments.

- Although AI has attracted attention-grabbing predictions about its potential to serve as a “research advisor” [7], familiarity with and understanding of its role in healthcare remain at a nascent stage; only 60% of respondents in a recent survey were acquainted with this technology [12]. In the scholarly communications universe, it is likely that a significant proportion of science and medical writers across the globe are likewise not “AI-literate”. Knowledge and awareness of AI-supported innovations are also essential for other stakeholders, such as publishers, editors, reviewers, and readers.

- In this article, we have listed and summarized various AI and other associated tools currently in use or development for a range of publishing obligations and functions that have brought about or can soon leverage much-demanded advances in scholarly communications. This detailed review focuses on the contributions of AI to various scholarly publishing tasks, such as literature review, information retrieval, systematic data syntheses, manuscript development (writing, editing, and revising), bibliography and citation management, target journal selection, plagiarism prevention, peer review, quality assessment, editorial workflow management, and publication production (including proofreading and dissemination).

Introduction

- A literature review forms the base of any publication project involving evidence-based research. According to the AI-powered Dimensions tool, 5,670,475 articles were published in 2019 and 6,166,992 in 2020 [13]. Roughly 20% of science journal articles come from China [14]. With the ever-increasing number of research productions, particularly in today’s infodemic era, data handling has become a cumbersome, tiring, and time-consuming task. AI excels at extracting signals from large volumes of noisy data and may help us find key information from the expanding academic literature.

- A fascinating product, COVID-19 Primer, uses intelligent natural language processing (NLP) to mine databases and generate daily research output trends. As of April 3, 2021, slightly over a year after the pandemic outbreak, more than 127,000 research papers had been published about COVID-19 itself [15]. The retrieval of accurate and sufficient information is thus desirable to achieve milestones. AI-assisted search engines may empower transparent and proficient data extraction tactics; for instance, tools like COVIDScholar and CLARA help users learn about COVID-19-related research information [16,17]. A recent hackathon-style randomized controlled trial (RCT) concluded that an AI-led review of medical literature could result in “focused searches” [18]. However, complying with adequate standards in using NLP and machine learning (ML), such as goodness-of-fit measures, cross-validation procedures, and sensitivity and specificity thresholds for search classification, is fundamental to obtaining reproducible, dependable, and precise search results in comparison with conventional searches [19]. In particular, ML approaches can struggle when the structure of the underlying data is not consistent.
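To make the sensitivity and specificity thresholds mentioned above concrete, the following is a minimal sketch of how the two measures could be computed for a search classifier against human screening decisions. The function name and the eight screening labels are hypothetical and purely illustrative; they are not taken from any of the cited tools or studies.

```python
def sensitivity_specificity(relevant, predicted):
    """Return (sensitivity, specificity) for a binary search classifier.

    relevant  -- list of bools, True if a human screener judged the record relevant
    predicted -- list of bools, True if the classifier retrieved the record
    """
    pairs = list(zip(relevant, predicted))
    tp = sum(r and p for r, p in pairs)          # relevant and retrieved
    fn = sum(r and not p for r, p in pairs)      # relevant but missed
    tn = sum(not r and not p for r, p in pairs)  # irrelevant and excluded
    fp = sum(not r and p for r, p in pairs)      # irrelevant but retrieved
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return sensitivity, specificity


# Hypothetical screening labels for eight records (illustrative data only).
human = [True, True, True, False, False, False, False, True]
model = [True, True, False, False, False, True, False, True]
sens, spec = sensitivity_specificity(human, model)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

In a systematic review setting, a team would typically fix a minimum acceptable sensitivity in advance, since a missed relevant study is usually costlier than an extra record to screen.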

- Semantic Scholar uses AI to mine the information available in published articles and provides users access to supplementary information to reproduce the results [20]. Wizdom.ai from Taylor & Francis deep-searches journal databases and connects data from various domains and concept areas [21]. Iris.ai follows a distinct strategy by sorting topic-based contents in the CORE database (with over 134 million research articles), amalgamating three different algorithms to generate “document fingerprints,” and then positioning the results based on relevance. Another tool from the same team, the blockchain-based Aiur, may understand the published content, compare it with other similar publications, and check and authenticate hypotheses [22]. Omnity, a multilingual AI tool, helps in the semantic data extraction of scholarly articles and patents in over 100 languages [23]. GrapAL applies NLP principles to a Neo4j graph database to identify inter-domain connections and generate citation-based metrics [24].

- Marshall and Wallace [25] list several notable AI-based tools that are in use for systematic review automation: RobotSearch and RCT Tagger for filtering RCTs; Thalia for the conceptual search and indexing of PubMed articles; RobotAnalyst and SWIFT-Review for obtaining topic-modeled search results; and ExaCT, RobotReviewer, and NaCTeM for data mining and automatic extraction of data elements. RobotReviewer, the ML-based evidence synthesis tool, automatically identifies critical RCT information, including the PICO (population, intervention, comparison, and outcome), design, and risk of bias, from research publications. Scholarcy provides meaningful AI-created summaries for research articles. This helps authors and science writers quickly understand the essential study-related information, such as settings, population, and findings [26].

- The use of semantic search in literature review goes beyond text-based approaches. SourceData looks for figures and legends in research publications, extracts metadata, compares and connects to similar images, and generates a “searchable knowledge graph”. It helps connect the traditional visual and textual account of research results to an ML-assisted depiction of data and hypotheses [27].

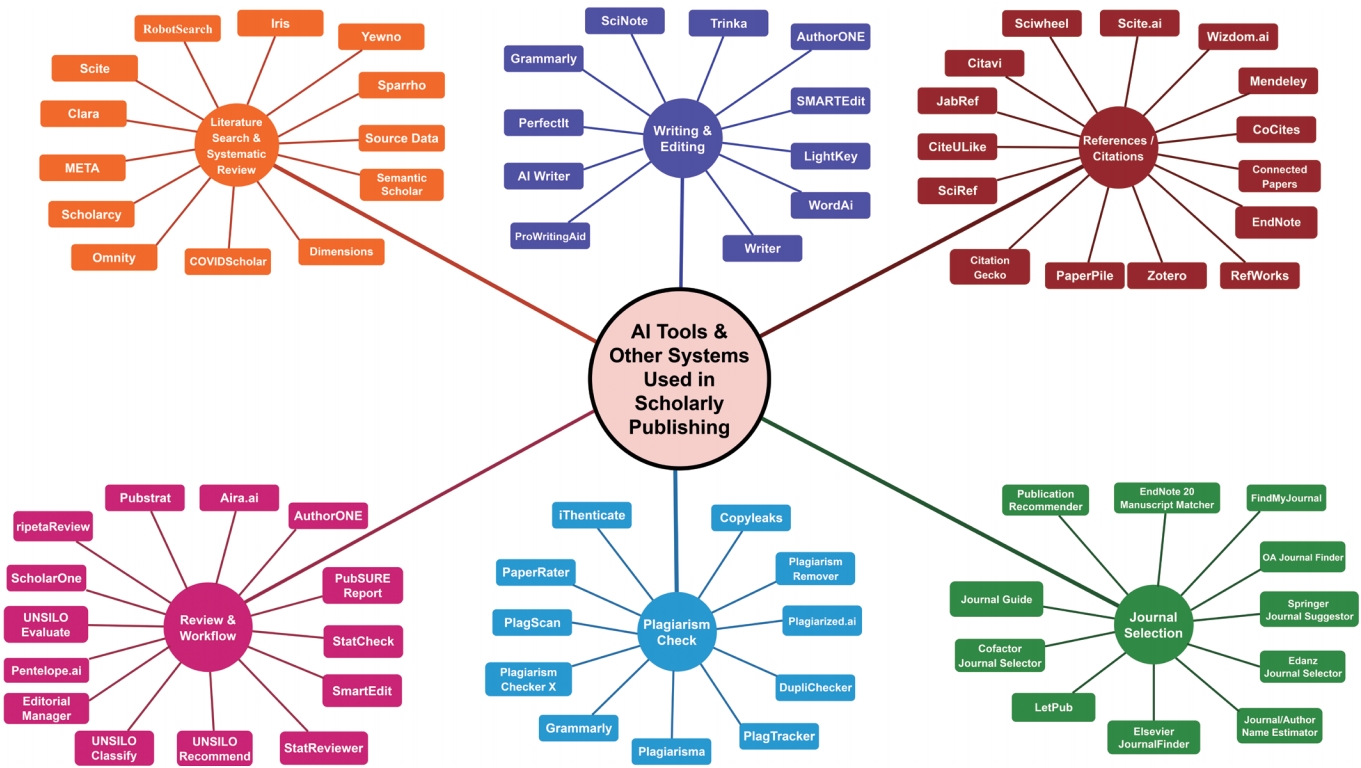

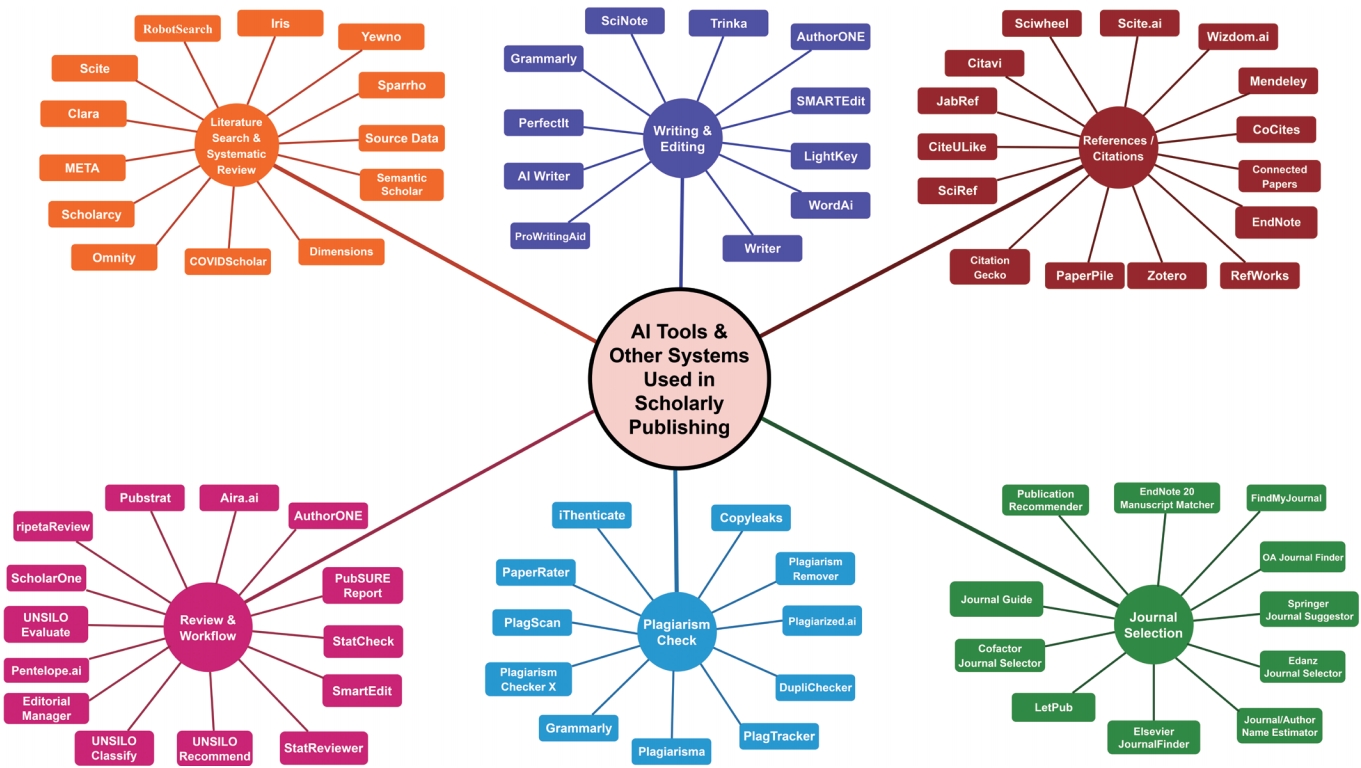

- Fig. 1 portrays various AI tools and certain associated non-AI solutions that help scholarly publishing stakeholders simplify their tasks and excel in different functions.

Literature Search and Information Retrieval

- “Writing robots” have made their way into mainstream journalism and literary creation in China. In 2019, Zhao et al. enumerated several Chinese robotic writing tools that include Dream Writer (Tencent News), Kuai Bi Xiao Xin and Inspire (Xinhua News Agency), Xiao Ming Bot (Beijing Byte Jump Technology Co., Ltd), A Tong and A Le (Guangzhou Daily), and others. These tools perform independent “robot journalism” by generating reports based on structured data (original writing) and creating entirely new content by mixing up and rewriting existing stories (creative writing) [28]. AI algorithms develop summaries of scientific publications and convert them to plain-language texts, press statements, and news stories [29,30].

- Recent NLP breakthroughs, particularly the development of transformer language models, have significantly enhanced the output quality that AI algorithms can generate. The exact nature in which AI-generated text is combined with human input is still being defined.

- Several AI-backed writing assistant tools have recently emerged in the market. They are widely diverse in their scope (such as medical, marketing, legal, business, academic, and scholarly writing) and scale (fully augmented, semi-automated, and simpler bots). G2, a software reviewing portal, has scored 39 such tools with varying scopes, including LightKey, WordAi, After the Deadline, PerfectTense, Writer, and AI Writer [31]. A few tools serve as “writing platforms”, which authors and writers use to develop content. The others are mere checkers and bots that support writing activity outside their platform by suggesting modifications.

- Interestingly, like the highly-rated Grammarly, some products have extensively been used in academic settings and scholarly research with positive feedback [32]. PerfectIt, another example, can be customized to suit any in-house style. Besides regular grammar checks, it focuses on abbreviations, style guides, and consistency in table/figure order and headers [33]. ProWritingAid serves as a grammar checker (including avoidance of overused words and suggestion of word combinations, repeats, and echoes), a style editor (including structure, length, and transition), and a “writing mentor” (providing tips for readability and consistency). It combines recommendations, texts, audio-visuals, and quizzes to make writing entertaining and interactive [34].

- Trinka, developed by Crimson.ai, is specially designed for academic and technical writing. Beyond its functions in correcting grammatical errors, it helps authors develop submission-ready documents. It auto-edits documents and provides corrections in track-changed versions. In addition to consistency checks similar to PerfectIt, Trinka offers a publication-readiness review [35]. AuthorONE, Crimson.ai’s other flagship product, performs a comprehensive assessment by checking over 60 items to finalize a submission-ready draft [36]. SciNote Manuscript Writer extracts data from references using keyword search and adds them to the draft adequately cited and annotated. Although the tool provides intellectual content for writing literature-based sections like introduction, the most creative and crucial part of the manuscript, the discussion section, requires a human touch [37].

Manuscript Preparation

- The use of recent, relevant, and quality references is pivotal for a good science publication. Nonetheless, preparing the references section and checking the accuracy of in-text citations often becomes tedious for a busy researcher. AI applications render much help in this domain. They are even capable of influencing citation patterns [38]. Wizdom.ai comes with an in-built AI-strengthened “citation recommender.” With the power of intelligent analytics backed by big data, this tool is developed to showcase a research article’s “projected citation impact” for 3 to 5 years. It can also highlight emerging research areas and concepts in various science domains and visualize evolving trends [21].

- Scite.ai is an ML platform that uses a “smart citation” feature to analyze the quality of references and list publications with editorial notices, errors, retractions, or disputes. It helps researchers understand scholarly citation practices by revealing the context of each citation and showing whether the citing text supports or disputes the cited work. Scite.ai has recently been introduced as a plug-in for Zotero, a reference management tool [39]. SciWheel uses a similar “SmartSearch” algorithm to recommend relevant articles in Microsoft Word and Google Docs plug-ins [40]. Tools like CoCites, Citation Gecko, and Connected Papers identify research publications by two innovative, unconventional methods named “co-citation” (two research articles appearing together in a single reference list) and “bibliographic coupling” (two papers citing a single publication) [41]. Connected Papers suggests prior and derivative works of input papers and builds a visual graph of related publications, which is of great use in research fields with evolving and novel developments (e.g., AI or COVID-19) [42]. Meta, one of the leading literature search portals, employs predictive algorithms to identify relevant papers, ranks them by eigenvector centrality, and integrates automatically with the Mendeley reference manager [43].
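The two citation-based measures defined above can be illustrated with a short sketch. It assumes a small, hypothetical mapping from citing papers to their reference lists; the paper identifiers and function names are invented for illustration, and the code is not the implementation used by CoCites, Citation Gecko, or Connected Papers.

```python
from collections import Counter
from itertools import combinations

# Hypothetical citation data: each citing paper maps to its reference list.
references = {
    "P1": {"A", "B", "C"},
    "P2": {"A", "B"},
    "P3": {"B", "C", "D"},
}


def cocitation_counts(refs):
    """Count how often two cited works appear together in one reference list."""
    counts = Counter()
    for cited in refs.values():
        for pair in combinations(sorted(cited), 2):
            counts[pair] += 1
    return counts


def coupling_strength(refs):
    """Count the references shared by each pair of citing papers."""
    return {
        (p, q): len(refs[p] & refs[q])
        for p, q in combinations(sorted(refs), 2)
    }


cocited = cocitation_counts(references)
coupled = coupling_strength(references)
print(cocited.most_common(2))
print(coupled)
```

Co-citation links older works through the papers that cite them, so the counts can grow over time, whereas bibliographic coupling is fixed once two papers are published, which makes it usable for very recent articles.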

- Automation features in EndNote 20 include deduplication of articles, bulk reference updates, auto-import of files, metadata extraction from imported files, and categorization of files into groups [44]. The other leading names in reference management do have their share of intuitive value additions that simplify referencing and citations—Citavi automatically adds citations and thus prevents plagiarism, JabRef automatically renames and sorts related files as per the user’s rules, and CiteULike has an automated publication recommendation feature [45-47].

Bibliography and Citation Management

- Choosing the right journal is a challenging task, mainly because of the influence of various factors, such as the existence of too many journals, high rejection rates in reputed journals, and the substantial emergence of the predatory market. An estimated 30,000 scholarly journals are published annually [48]; fishing the right one out of this colossal ocean requires fulfilling multiple criteria and applying thoughtful selection strategies. At the same time, web-only, open access (OA) journals tend to disappear from the online space. A recent preprint reported that some 174 journals vanished between 2000 and 2019, raising concerns over the long-term preservation of research information [49]. Digitalization, particularly the OA model, has also been accused of bringing in more predatory players who do not follow good publication practices. Duc et al. [50] warned of the penetration of predatory journals even in popular databases like Web of Science, Scopus, and PubMed.

- Selecting a good journal is thus a serious obligation with long-term implications for publishing laborious research work and must follow a thorough checking of various key factors: scientific rigor, transparency in editorial and peer review process, policies on different editorial functions, reputation, and impact [51]. Many renowned journals have high rejection rates—reaching as high as 97% [52]—making the target journal selection process an arduous task for researchers and authors. An appropriate journal for a manuscript is chosen based on the merit of the written manuscript. AI-based interventions thus have a role to play, although there are plenty of simple, straightforward web-based solutions on the market (Fig. 1).

- OA Journal Finder from Crimson.ai follows a search algorithm using a “validated journal index” supported by the DOAJ (Directory of Open Access Journals) and avoids predatory journals [53]. The company’s other tool, FindMyJournal, employs an intelligent algorithm to search a large pool of journals (over 29,000 journals) using researchers’ responses to 11 objective questions and suggests the top five journals for submission [54]. The “manuscript matcher” function in EndNote 20 applies complex algorithms, Web of Science information, and statistics from Journal Citation Reports to suggest impactful journals by providing the “match score” to shorten the target journal search. This value-added search option is also integrated with the EndNote 20’s Microsoft Word plug-in, “cite while you write” [55]. Elsevier’s JournalFinder involves clever search know-how supported by the in-house built “fingerprint engine” and subject-specific vocabularies to identify the correct journal choice [56].

Target Journal Selection

- Plagiarism is believed to have first been reported during 40 to 140 AD, when Fidentinus, a Roman poet, recited a poem penned by Martial without the latter’s acknowledgment [57]. Since then, the act of plagiarism has evolved beyond a mere copy-paste issue. In the digital era, manual plagiarism detection is not viable. Trouble-free access to online sources has made it easy for researchers, especially those in their early careers and from non-English speaking communities, to commit plagiarism, resulting in academic disrespect, credibility damage, manuscript retractions, and a compromised reputation [58].

- The use of general web crawlers and search engine optimization tools may not be sufficient for checking plagiarism in academic and scholarly publications. The availability of several text-modification tools has even made things worse by helping authors to evade plagiarism detection. Similar to the role of innovative writing assistants, AI-powered algorithms and tools can help detect plagiarism, as they outweigh general web crawlers by identifying content similarity at different levels with the assistance of cloud computing and big data [59,60].

- Exciting studies and promising results are emerging in this domain; highly-capable AI solutions are being designed to tackle the infiltration of plagiarism in scholarly publishing [61]. Some novel tools detect plagiarism in multiple languages, bar chart images (using optical character recognition), and paraphrased contents [62,63]. Sahu [64] used the k-nearest neighbor algorithm, an ML method, to recognize patterns and identify plagiarized content based on similarity. Chitra and Rajkumar [65] developed an ML-based paraphrase recognizer that could extract lexical, syntactic, and semantic information from texts, with a favorable outcome in passage-level searches.

- CopyLeaks, also powered by ML, helps detect plagiarism in over a hundred languages [66]. Plagiarism Rater applies NLP principles to parse and extract textual content [67]. iThenticate, a market leader in the scholarly publishing space, provides a side-by-side comparison of text content and source materials and presents a “similarity index” as a percentage, indicating the amount of copy-pasted matches [68]. Interestingly, writing assistants like Grammarly and ProWritingAid also have plagiarism checking features [32,34].
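As a rough illustration of what a percentage-based similarity index measures, the sketch below compares overlapping three-word sequences (word shingles) between a submission and a single source. This is an assumed, simplified technique chosen for clarity; it is not the algorithm used by iThenticate or any other tool named above, and the function names and sample sentences are hypothetical.

```python
import re


def shingles(text, n=3):
    """Return the set of n-word sequences in a text, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def similarity_index(submission, source, n=3):
    """Percentage of the submission's shingles that also occur in the source."""
    sub = shingles(submission, n)
    if not sub:
        return 0.0
    return 100.0 * len(sub & shingles(source, n)) / len(sub)


# Illustrative sentences only.
submission = "AI tools can help detect plagiarism in scholarly publishing"
source = "Modern AI tools can help detect plagiarism quickly"
score = similarity_index(submission, source)
print(f"similarity index: {score:.1f}%")
```

Exact shingle matching of this kind catches copy-pasted passages but not paraphrased ones, which is why the paraphrase recognizers discussed earlier rely on lexical, syntactic, and semantic features instead.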

- There have been suggestions to implement AI-supported stylometry to detect plagiarism as each author has his or her own “writing fingerprint” [69]. Despite the novelty of this concept, it may only be suitable for academic writing or scholarly publications with a single author. Manuscripts with multiple authors may involve feedback from all stakeholders, and thus a stylometric analysis may not be feasible.

Plagiarism Prevention

- The ever-increasing demand for peer reviewers has caused a “review imbalance” in the scholarly publishing domain. According to a 2016 report by Kovanis et al. [70], over 90% of review tasks are handled by only 20% of researchers. The COVID-19 pandemic has further highlighted this gap and stressed the need to streamline and complement the existing review process, primarily due to the need to repurpose drugs for new treatments. The role of NLP-driven AI in peer review is thus considered highly significant, as it can perform feature- and profile-based matching of reviewers and involve a bias-free selection of potential reviewers [71,72].
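Profile-based reviewer matching can be sketched as ranking candidate reviewers by how closely their expertise keywords overlap with those of a manuscript. The example below uses cosine similarity over keyword counts; the reviewer names, keywords, and function names are hypothetical, and production systems would also need to handle conflicts of interest and reviewer workload, which this sketch does not attempt.

```python
import math
from collections import Counter


def cosine(a, b):
    """Cosine similarity between two keyword-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def rank_reviewers(manuscript_keywords, reviewer_profiles):
    """Return reviewer names ordered from best to worst keyword match."""
    target = Counter(manuscript_keywords)
    scored = [
        (cosine(target, Counter(keywords)), name)
        for name, keywords in reviewer_profiles.items()
    ]
    # Sort by descending score; break ties alphabetically for stable output.
    return [name for score, name in sorted(scored, key=lambda s: (-s[0], s[1]))]


# Hypothetical manuscript and reviewer profiles (illustrative data only).
manuscript = ["hepatology", "machine learning", "imaging"]
profiles = {
    "R1": ["hepatology", "imaging", "transplant"],
    "R2": ["cardiology", "statistics"],
    "R3": ["machine learning", "hepatology", "imaging"],
}
ranking = rank_reviewers(manuscript, profiles)
print(ranking)
```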

- AIRA from Frontiers has become one of the first AI-supported tools used for peer review of scholarly manuscripts; it reviews manuscripts and offers 20 recommendations on grammar and style, figures and legends, and plagiarized content, in addition to warning about conflicts of interest [73]. PubSURE Report, an AI-backed assessment tool trained with millions of published articles, can examine “reporting hygiene” related to readability, adherence, and comprehensiveness [74]. ripetaReview performs similar checks in addition to inspecting “reproducibility variables” and analysis methods [75].

- Ghosal et al. [76] proposed an automated system to assist editors in decision-making by weighing the merit of a submitted manuscript using trained ML classifiers. Mrowinski et al. [77] used Cartesian genetic programming to develop an artificially evolving method that reduces the peer-review time by about 30% without increasing the reviewer base. Nevertheless, automated peer review systems, trained with the previously accepted manuscripts of any particular journal, can pose possible “in-built biases” [78].

- In 2013, Nuijten et al. [79] assessed the statistical quality of manuscripts published in eight psychosocial journals over the previous 28 years. The results revealed that at least half of them had statistical errors, with serious faults in one of eight papers. This encouraged the group to develop StatCheck, an exclusive tool to help psychology journal editors detect statistical errors in submitted manuscripts. StatReviewer, another decision support tool integrated into Editorial Manager, examines the use of correct statistical approaches in manuscripts and helps recognize deceitful conduct. It checks for obvious numerical errors and highlights concerns related to quality, style, and reporting methodology [80].

Peer Review and Quality Assessment

- Kim et al. [81] compared nine manuscript management systems concerning authors, reviewers, and editors’ viewpoints on various areas such as registration, authority control, file uploading, input keyword, input metadata, and review process communication. They suggested improvements in functionality and highlighted the need to simplify editorial tasks at multiple levels.

- A survey of editorial offices by UNSILO.ai claimed that over 85% perform pre-review technical checks, and over 65% manually cross-verify details between forms and manuscripts submitted [82]. This AI player has introduced an innovative tool, UNSILO Evaluate, which is integrated into ScholarOne, the leading editorial workflow management system from Clarivate Analytics, to conduct technical checks at manuscript screening and reduce the substantial time and effort invested in this process [83]. Even publishers that use in-house submission and workflow management systems check and correct references in submitted manuscripts using its AI-supported auto-analyzer tool [84]. Penelope.ai has the same functionality and matches a journal’s specific requirements for references [78]. AuthorONE checks authorship declarations, ethical compliance, inclusive language, and word count reduction [36]. Pubstrat features automated workflow management alongside an option for automated citation creation [85].

- Meta’s bibliometric intelligence, which is integrated into Editorial Manager, applies ML algorithms (trained on its corpus of scientific collections) and estimates the “future citation count” of a newly submitted manuscript. This helps editors to choose priority manuscripts and allows for triaging and ranking. Interestingly, in extensive studies, Meta’s bibliometric intelligence out-performed (by 2.5 times) pre-publication impact prediction by editors [86]. Cenveo Publishing uses NLP in its SmartEdit tool for copy editing and proof generation and rationalizes the production process by converting texts into XML format [87].

- UNSILO.ai’s other products help publishers in production and post-publishing activities; UNSILO Classify, through its ML technology, helps develop topic-based content packages, while UNSILO Recommend offers content recommendation features to improve click and retention rates [88]. ML can enhance post-publication discoverability by providing high precision recommendations [89].

- Publishing houses in China use AI to design layout, format contents, choose and acquire the right images, annotate, identify speech and objects, and index [90]. Similar principles are being applied in scholarly publications, wherein journal-specific formatting, an overburdened process, can be managed using automation to prevent irregularities possibly missed due to human oversight [91]. However, automated proofreading tools may not be free from inaccuracies [92].

Editorial Workflow and Publication Production

- The recent decades have seen a soaring number of articles published, unquestionably urging stakeholders to adopt AI in the future [93]. Predatory OA journals with low article-processing charges and rapid dissemination in online platforms not only malign academic integrity, but also add compromised content to the vast existing pool of scholarly articles [94]. The COVID-19 pandemic has exacerbated this scenario further, with about 367 pandemic-related articles published per week with a 6-day median lead time to acceptance (vs. 84 days for other topics) [95]. There are two crucial paths forward for AI: prospectively improving the quality of publishable content and adopting retrospective checks for existing content in the public domain to identify missed obligations and correct them for better use [96]. As discussed in various sections of this manuscript, ML is certainly not new to scholarly publishing. Content enrichment and algorithm-based searches are two niche areas of semantic technology that can yield enhanced search discoverability, transforming scholarly information into a technology-driven industry that would highly depend on big data and ML [97,98].

Future Prospects

- AI has a long way to go in this intellectual domain, as it has to find a suitable position in mimicking more, but not all, human-only characteristics [93]. Currently, there are various AI-based product choices for scholarly publishing, but the availability of too many products may itself confuse end-users trying to choose the appropriate ones.

- Palmer [99], a prominent business consultant and one of LinkedIn’s top 10 technology experts, warns that report writers, journalists, and authors may have to give their jobs to robots soon. There may be hesitancy among professionals in accepting innovation and changes for fear of job loss. Interestingly, history could remind us of similar predictions and warnings when attempts were made to use typewriting machines to replace handwritten materials or when the publishing world slowly moved from paper to online platforms. Hence, change is permanent, but the impact would be on how fast the scientific community adapts to advanced AI technologies. More importantly, the results of the 2019 Gould Finch and Frankfurter Buchmesse’s survey [11] have discredited the assumption that AI will take over the job roles of writers and editors. The authors of that survey report are convinced that AI implementation does not result in job cuts; instead, it reinforces and supports the human workforce. This is a prime period to promote human-machine collaboration through training and preparation to augment value creation and improve performance and delivery.

Conclusion

-

Conflict of Interest

No potential conflict of interest relevant to this article was reported. The opinions provided in this manuscript reflect the authors’ personal views and do not represent those of their affiliated organizations.

Funding

The authors received no financial support for this article.

Notes

- 1. Marr B. The key definitions of artificial intelligence (AI) that explain its importance. Forbes [Internet]; 2018 Feb. 14. [cited 2021 Apr 4]. Available from: https://www.forbes.com/sites/bernardmarr/2018/02/14/the-key-definitionsof-artificial-intelligence-ai-that-explain-its-importance/.

- 2. Copeland BJ. Artificial intelligence. Encyclopaedia Britannica [Internet]; 2020 Aug. 11. [cited 2021 Apr 4]. Available from: https://www.britannica.com/technology/artificial-intelligence.

- 3. Awalegaonkar K, Berkey R, Douglass G, Reilly A. AI: built to scale. From experimental to exponential. Accenture Applied Intelligence [Internet]; 2019 Nov. 14. [cited 2021 Apr 4]. Available from: https://www.accenture.com/_acnmedia/Thought-Leadership-Assets/PDF-2/Accenture-Built-toScale-PDF-Report.pdf.

- 4. McKinsey Analytics. The state of AI in 2020. McKinsey & Company [Internet]; 2020 Nov. 17. [cited 2021 Apr 4]. Available from: https://www.mckinsey.com/business-functions/mckinsey-analytics/our-insights/global-survey-the-state-of-ai-in-2020.

- 5. Duranton S, Erlebach J, Pauly M. Mind the (AI) gap: leadership makes the difference. Boston Consulting Group [Internet]; 2018 Dec. [cited 2021 Apr 4]. Available from: https://image-src.bcg.com/Images/Mind_the%28AI%29Gap-Focus_tcm9-208965.pdf.

- 6. True Anthem. How artificial intelligence can make publishing more profitable. True Anthem [Internet]; 2017 May. [cited 2021 Apr 4]. Available from: https://www.trueanthem.com/wp-content/uploads/2017/05/True_Anthem_Whitepaper_V1.pdf.

- 7. Kim K. Artificial intelligence and publishing. Sci Ed 2019;6:89-90. https://doi.org/10.6087/kcse.168.

- 8. Springer Nature. Springer Nature publishes its first machine-generated book. Springer Nature [Internet]; 2019 Apr. 2. [cited 2021 Apr 4]. Available from: https://www.springer.com/gp/about-springer/media/press-releases/corporate/springer-nature-machine-generated-book/16590126.

- 9. Soyer P. Medical writing and artificial intelligence. Diagn Interv Imaging 2019;100:1-2. https://doi.org/10.1016/j.diii.2018.12.003.

- 10. Costa S. Embracing a new friendship: artificial intelligence and medical writers. Med Writ 2019;28:14-7.

- 11. Lovrinovic C, Volland H. The future impact of artificial intelligence on the publishing industry. Gould Finch; Frankfurter Buchmesse [Internet]; 2019 Oct. [cited 2021 Apr 4]. Available from: https://www.buchmesse.de/files/media/pdf/White_Paper_AI_Publishing_Gould_Finch_2019_EN.pdf.

- 12. Parisis N. Medical writing in the era of artificial intelligence. Med Writ 2019;28:4-9.

- 13. Dimensions. Publications by year: 2019 and 2020. Dimensions [Internet]; [cited 2021 Apr 4]. Available from: https://app.dimensions.ai/discover/publication.

- 14. White K. Publications output: U.S. trends and international comparisons. Publication output, by region, country, or economy. National Science Foundation [Internet]; 2018 [cited 2021 Apr 4]. Available from: https://ncses.nsf.gov/pubs/nsb20206/publication-output-by-region-country-or-economy.

- 15. Primer. COVID-19 Primer: dashboard. Primer.ai [Internet]; 2021 [cited 2021 Apr 4]. Available from: https://covid19primer.com/dashboard.

- 16. COVID Scholar. COVID-19 literature search powered by advanced NLP algorithms. COVID Scholar [Internet]; [cited 2021 Apr 4]. Available from: https://www.covidscholar.org/.

- 17. Enago. CLARA: COVID-19 learning and research accelerator. Enago [Internet]; [cited 2021 Apr 4]. Available from: https://www.enago.com/covid/.

- 18. Schoeb D, Suarez-Ibarrola R, Hein S, et al. Use of artificial intelligence for medical literature search: randomized controlled trial using the Hackathon format. Interact J Med Res 2020;9:e16606. https://doi.org/10.2196/16606.

- 19. Wu EQ, Royer J, Ayyagari R, Signorovitch J, Thokala P. PCP25: artificial intelligence assisted literature reviews: key considerations for implementation in health care research. Value Health 2018;21(Suppl 3):S85. https://doi.org/10.1016/j.jval.2018.09.500.

- 20. Semantic Scholar. About Semantic Scholar: helping scholars discover new insights. Semantic Scholar [Internet]; [cited 2021 Apr 4]. Available from: https://pages.semanticscholar.org/about-us.

- 21. Wizdom.ai. Intelligence for researchers. Wizdom.ai [Internet]; 2019 Oct. 16. [cited 2021 Apr 4]. Available from: https://www.wizdom.ai/#researchers.

- 22. Extance A. How AI technology can tame the scientific literature. Nature 2018;561:273-4. https://doi.org/10.1038/d41586-018-06617-5.

- 23. Lindsey M. Omnity.io semantically maps documents in 100+ languages to enable multilingual research & discovery. CISION PR Newswire [Internet]; 2016 Dec. 15. [cited 2021 Apr 4]. Available from: https://www.prnewswire.com/news-releases/omnityio-semantically-maps-documents-in-100-languages-to-enable-multilingual-research--discovery-300376975.html.

- 24. Betts C, Power J, Ammar W. GrapAL: connecting the dots in scientific literature. arXiv:1902.05170v2 [Preprint]. 2019 May 19 [cited 2021 Apr 4]. Available from: https://arxiv.org/abs/1902.05170v2.

- 25. Marshall IJ, Wallace BC. Toward systematic review automation: a practical guide to using machine learning tools in research synthesis. Syst Rev 2019;8:163. https://doi.org/10.1186/s13643-019-1074-9.

- 26. Gooch P. How reviewers can use AI right now to make peer review easier: technology to help reviewers keep on top of the growth in submissions. Scholarcy [Internet]; 2020 Dec. 6. [cited 2021 Apr 4]. Available from: https://www.scholarcy.com/how-reviewers-can-use-ai-right-now-to-make-peer-review-easier/.

- 27. Liechti R, George N, Gotz L, et al. SourceData: a semantic platform for curating and searching figures. Nat Methods 2017;14:1021-2. https://doi.org/10.1038/nmeth.4471.

- 28. Zhao Y, Prabhashini K. Applications of artificial intelligence in digital publishing industry in China. Paper presented at: The 3rd International Conference on Robotics and Automation Sciences. 2019 Jun 1-3; Wuhan, China. 254-9. https://doi.org/10.1109/ICRAS.2019.8808984.

- 29. Malewar A. Can science writing be automated? Tech Explorist [Internet]; 2019 Apr. 18. [cited 2021 Apr 4]. Available from: https://www.techexplorist.com/can-science-writing-be-automated/22345/.

- 30. Tatalovic M. AI writing bots are about to revolutionise science journalism: we must shape how this is done. J Sci Commun 2018;17: https://doi.org/10.22323/2.17010501.

- 31. G2 Research. Compare AI writing assistant software. G2 [Internet]; [cited 2021 Apr 4]. Available from: https://www.g2.com/categories/ai-writing-assistant.

- 32. Rao M, Gain A, Bhat S. Usage of Grammarly: online grammar and spelling checker tool at the Health Sciences Library, Manipal Academy of Higher Education, Manipal: a study. Libr Philos Pract 2019;2610.

- 33. PerfectIt. Product introduction. PerfectIt [Internet]; [cited 2021 Apr 4]. Available from: https://intelligentediting.com/product/introduction/.

- 34. ProWritingAid. App features. ProWritingAid [Internet]; [cited 2021 Apr 4]. Available from: https://prowritingaid.com/en/App/Features.

- 35. Trinka. Key features of Trinka. Crimson.ai [Internet]; [cited 2021 Apr 4]. Available from: https://www.trinka.ai/features/.

- 36. AuthorOne. Over 60 checks for complete readiness assessment. Enago AuthorOne [Internet]; [cited 2021 Apr 4]. Available from: https://www.authorone.ai/features.htm.

- 37. Pavlek T. SciNote can write a draft of your scientific manuscript using artificial intelligence. SciNote [Internet]; 2017 Nov. 18. [cited 2021 Apr 4]. Available from: https://www.scinote.net/scinote-can-write-draft-scientific-manuscript-using-artificial-intelligence.

- 38. Moltzau A. Artificial intelligence and citation patterns. Medium’s The StartUp [Internet]; 2019 Oct. 16. [cited 2021 Apr 4]. Available from: https://medium.com/swlh/artificialintelligence-and-citation-patterns-7d7087d61106.

- 39. Scite.ai. Smart citations for better research. Scite.ai [Internet]; [cited 2021 Apr 4]. Available from: https://scite.ai/.

- 40. Towfiq H. How smart is our SmartSearch? Sciwheel Blog [Internet]; 2020 Jun. 9. [cited 2021 Apr 4]. Available from: https://www.sciwheel.com/blog/how-smart-is-our-smartsearch/.

- 41. Gadd E. AI-based citation evaluation tools: good, bad or ugly? The Bibliomagician [Internet]; 2020 Jul. 23. [cited 2021 Apr 4]. Available from: https://thebibliomagician.wordpress.com/2020/07/23/ai-based-citation-evaluation-tools-good-bad-or-ugly/.

- 42. Connected Papers. Explore connected papers in a visual graph. Connected Papers [Internet]; [cited 2021 Apr 4]. Available from: https://www.connectedpapers.com/.

- 43. Meta Help Center. Will Meta work with my reference manager? Meta [Internet]; [cited 2021 Apr 4]. Available from: https://help.meta.org/hc/en-us/articles/360015344832-Will-Meta-work-with-my-reference-manager.

- 44. EndNote. Accelerate your research with EndNote 20. Clarivate EndNote [Internet]; [cited 2021 Apr 4]. Available from: https://endnote.com/product-details/compare-previous-versions.

- 45. Citavi. The only all-in-one referencing and note-taking solution. Citavi [Internet]; [cited 2021 Apr 4]. Available from: https://www.citavi.com/en.

- 46. JabRef. Stay on top of your literature. JabRef [Internet]; [cited 2021 Apr 4]. Available from: https://www.jabref.org/#features.

- 47. Springer. CiteULike: everyone’s library. Springer Nature [Internet]; [cited 2021 Apr 4]. Available from: https://www.springer.com/about+springer/citeulike?SGWID=0-164102-0-0-0.

- 48. Altbach PG, de Wit H. Too much academic research is being published. University World News [Internet]; 2018 Sep. 7. [cited 2021 Apr 4]. Available from: https://www.universityworldnews.com/post.php?story=20180905095203579#:~:text=No%20one%20knows%20how%20many,million%20articles%20published%20each%20year.

- 49. Laakso M, Matthias L, Jahn N. Open is not forever: a study of vanished open access journals. J Assoc Inf Sci Technol; 2021 Feb. 21. https://doi.org/10.1002/asi.24460.

- 50. Duc NM, Hiep DV, Thong PM, et al. Predatory open access journals are indexed in reputable databases: a revisiting issue or an unsolved problem. Med Arch 2020;74:318-22. https://doi.org/10.5455/medarh.2020.74.318-322.

- 51. Suiter AM, Sarli CC. Selecting a journal for publication: criteria to consider. Mo Med 2019;116:461-5.

- 52. Mukherjee D. 11 Reasons why research papers are rejected. Typeset Blog [Internet]; 2018 Mar. 7. [cited 2021 Apr 4]. Available from: https://blog.typeset.io/11-reasons-why-researchpapers-are-rejected-3e272b633186.

- 53. Enago. Open access journal finder: find the best suited English journals for your paper. Enago [Internet]; [cited 2021 Apr 4]. Available from: https://www.enago.com/academy/journal-finder/.

- 54. FindMyJournal. Find the best journals. FindMyJournal [Internet]; [cited 2021 Apr 4]. Available from: https://www.findmyjournal.com/.

- 55. EndNote. Introducing: Manuscript Matcher. Clarivate EndNote [Internet]; [cited 2021 Apr 4]. Available from: https://endnote.com/product-details/manuscript-matcher/.

- 56. JournalFinder. Find the perfect journal for your article. Elsevier [Internet]; [cited 2021 Apr 4]. Available from: https://journalfinder.elsevier.com/about.

- 57. Bailey J. The world’s first “plagiarism” case. PlagiarismToday [Internet]; 2011 Oct. 4. [cited 2021 Apr 4]. Available from: https://www.plagiarismtoday.com/2011/10/04/the-world%E2%80%99s-first-plagiarism-case/.

- 58. Enago Academy. How to avoid plagiarism in research papers (part 1). Enago [Internet]; 2020 Nov. 24. [cited 2021 Apr 4]. Available from: https://www.enago.com/academy/how-to-avoid-plagiarism-in-research-papers/.

- 59. Kumar A. The role of AI in checking plagiarized text. G2 Learning Hub [Internet]; 2020 Mar. 3. [cited 2021 Apr 4]. Available from: https://learn.g2.com/ai-for-plagiarism.

- 60. Bansal S. Role of artificial intelligence in plagiarism detection. Analytix Labs [Internet]; [cited 2021 Apr 4]. Available from: https://www.analytixlabs.co.in/blog/artificial-intelligence-in-plagiarism-detection/.

- 61. Subroto IM, Selamat A. Plagiarism detection through internet using hybrid artificial neural network and support vectors machine. Telkomnika 2014;12:209-18. http://dx.doi.org/10.12928/telkomnika.v12i1.4.

- 62. Gang L, Quan Z, Guang L. Cross-language plagiarism detection based on WordNet. Paper presented at: The 2nd International Conference on Innovation in Artificial Intelligence (ICIAI 2018). 2018 Mar 9-12; Shanghai, China. 163-8. https://doi.org/10.1145/3194206.3194222.

- 63. Altheneyan A, Menai ME. Evaluation of state-of-the-art paraphrase identification and its application to automatic plagiarism detection. Int J Pattern Recognit Artif Intell 2020;34:2053004. https://doi.org/10.1142/S0218001420530043.

- 64. Sahu M. Plagiarism detection using artificial intelligence technique in multiple files. Int J Sci Tech Res 2016;5:111-4.

- 65. Chitra A, Rajkumar A. Plagiarism detection using machine learning-based paraphrase recognizer. J Intell Syst 2016;25:351-9. https://doi.org/10.1515/jisys-2014-0146.

- 66. CopyLeaks. Proving originality, promoting integrity, preventing plagiarism, and protecting you. CopyLeaks [Internet]; [cited 2021 Apr 4]. Available from: https://copyleaks.com/.

- 67. PaperRater. Artificial intelligence. PaperRater [Internet]; [cited 2021 Apr 4]. Available from: https://www.paperrater.com/page/artificial-intelligence.

- 68. Preusler C. iThenticate: a cloud-based platform that helps organizations protect their reputations by detecting plagiarism before publication. HostingAdvice [Internet]; 2019 Jan. 15. [cited 2021 Apr 4]. Available from: https://www.hostingadvice.com/blog/ithenticate-helps-organizations-detect-plagiarism/.

- 69. Sydney F. Machine learning and AI for plagiarism detection. Alteryx [Internet]; 2019 Jun. 6. [cited 2021 Apr 4]. Available from: https://community.alteryx.com/t5/Data-Science/Machine-Learning-and-AI-for-Plagiarism-Detection/ba-p/411072.

- 70. Kovanis M, Porcher R, Ravaud P, Trinquart L. The global burden of journal peer review in the biomedical literature: strong imbalance in the collective enterprise. PLoS One 2016;11:e0166387. https://doi.org/10.1371/journal.pone.0166387.

- 71. Levin JM, Oprea TI, Davidovich S, et al. Artificial intelligence, drug repurposing and peer review. Nat Biotechnol 2020;38:1127-31. https://doi.org/10.1038/s41587-020-0686-x.

- 72. Cyranoski D. Artificial intelligence is selecting reviewers in China. Nature 2019;569:316-7. https://doi.org/10.1038/d41586-019-01517-8.

- 73. Frontiers Science. Artificial intelligence to help meet global demand for high-quality, objective peer-review in publishing. Frontiers Science News [Internet]; 2020 Jul. 1. [cited 2021 Apr 4]. Available from: https://blog.frontiersin.org/2020/07/01/artificial-intelligence-to-help-meet-global-demand-for-high-quality-objective-peer-review-in-publishing/.

- 74. Editage Insights. The first AI-powered manuscript submission marketplace connecting authors and journals. Editage Insights [Internet]; 2019 Sep. 5. [cited 2021 Apr 4]. Available from: https://www.editage.com/insights/the-first-ai-powered-manuscript-submission-marketplace-connecting-authors-and-journals.

- 75. ripetaReview. ripetaReview: Built for intuitive use. ripetaReview [Internet]; [cited 2021 Apr 4]. Available from: https://ripeta.com/products/ripetareview/.

- 76. Ghosal T, Verma R, Ekbal A, Saha S, Bhattacharyya P. An AI aid to the editors: exploring the possibility of an AI assisted article classification system. arXiv:1802.01403v2 [Preprint]. 2018 Feb 17 [cited 2021 Apr 4]. Available from: https://arxiv.org/pdf/1802.01403v2.pdf.

- 77. Mrowinski MJ, Fronczak P, Fronczak A, Ausloos M, Nedic O. Artificial intelligence in peer review: how can evolutionary computation support journal editors? PLoS One 2017;12:e0184711. https://doi.org/10.1371/journal.pone.0184711.

- 78. Heaven D. AI peer reviewers unleashed to ease publishing grind. Nature 2018;563:609-10. https://doi.org/10.1038/d41586-018-07245-9.

- 79. Nuijten MB, Hartgerink CHJ, van Assen MA, Epskamp S, Wicherts JM. The prevalence of statistical reporting errors in psychology (1985–2013). Behav Res Methods 2016;48:1205-26. https://doi.org/10.3758/s13428-015-0664-2.

- 80. StatReviewer. Automated statistical support for journals and authors. StatReviewer [Internet]; [cited 2021 Apr 4]. Available from: http://www.statreviewer.com/.

- 81. Kim S, Choi H, Kim N, Chung E, Lee JY. Comparative analysis of manuscript management systems for scholarly publishing. Sci Ed 2018;5:124-34. https://doi.org/10.6087/kcse.137.

- 82. Upshall M. UNSILO survey reveals significant potential for improving scholarly journal submission workflows. UNSILO [Internet]; 2020 Jan. 15. [cited 2021 Apr 4]. Available from: https://unsilo.ai/2020/01/15/unsilo-survey-reveals-significant-potential-for-improving-scholarly-journal-submission-workflows/.

- 83. UNSILO. Press Release: UNSILO, a Cactus Communications company, announces full integration of technical checks tool evaluate with ScholarOne. UNSILO [Internet]; 2020 Mar. 4. [cited 2021 Apr 4]. Available from: https://unsilo.ai/wp-content/uploads/2020/04/Press-Release-UNSILO-Clarivate-technical-checks-integration-rev.pdf.

- 84. Baishideng Publishing Group. BPG: committed to discovery and dissemination of knowledge. Baishideng Publishing Group [Internet]; [cited 2021 Apr 4]. Available from: https://www.wjgnet.com/bpg.

- 85. Pubstrat. Pubstrat medical affairs software suite. Anju Life Sciences [Internet]; [cited 2021 Apr 4]. Available from: https://www.anjusoftware.com/solutions/medical-affairs/pubstrat.

- 86. Aries Systems. Press release: artificial intelligence integration allows publishers a first look at meta bibliometric intelligence. Aries Systems [Internet]; 2016 Oct. 17. [cited 2021 Apr 4]. Available from: https://www.ariessys.com/views-press/press-releases/artificial-intelligence-integration-allows-publishers-first-look-meta-bibliometric-intelligence/.

- 87. Cenveo Publisher Services. How artificial intelligence & natural language processing are transforming scholarly communications. KnowledgeWorks Global Ltd [Internet]; 2019 [cited 2021 Apr 4]. Available from: https://www.kwglobal.com/ai-and-nlp-for-publishers.

- 88. UNSILO. Rethinking publishing with AI. UNSILO [Internet]; [cited 2021 Apr 4]. Available from: https://unsilo.ai/.

- 89. Wang K. Opportunities in open science with AI. Front Big Data 2019;2:26. https://doi.org/10.3389/fdata.2019.00026.

- 90. Huang A. The era of artificial intelligence and big data provides knowledge services for the publishing industry in China. Publ Res Q 2019;35:164-71. https://doi.org/10.1007/s12109-018-9616-x.

- 91. Spurlock K, Jernigan B. Artificial intelligence in manuscript preparation. Hindawi Blog [Internet]; 2020 Oct. 6. [cited 2021 Apr 4]. Available from: https://www.hindawi.com/post/artificial-intelligence-manuscript-preparation/.

- 92. Panter M. Proofreading academic writing: human vs. machine. AJE Scholar [Internet]; [cited 2021 Apr 4]. Available from: https://www.aje.com/arc/proofreading-academic-writing-human-vs-machine/.

- 93. Gabriel A. Artificial intelligence in scholarly communications: an Elsevier case study. Inf Serv Use 2019;39:319-33. https://doi.org/10.3233/ISU-190063.

- 94. Baffy G, Burns MM, Hoffmann B, et al. Scientific authors in a changing world of scholarly communication: what does the future hold? Am J Med 2020;133:26-31. https://doi.org/10.1016/j.amjmed.2019.07.028.

- 95. Palayew A, Norgaard O, Safreed-Harmon K, Anderson TH, Rasmussen LN, Lazarus JV. Pandemic publishing poses a new COVID-19 challenge. Nat Hum Behav 2020;4:666-9. https://doi.org/10.1038/s41562-020-0911-0.

- 96. DeVoss CC. What might peer review look like in 2030? figshare [Internet]; 2017 May. 2. [cited 2021 Apr 4]. https://doi.org/10.6084/m9.figshare.4884878.v1.

- 97. HighWire. The potential impact of artificial intelligence on the scholarly publishing ecosystem. HighWire [Internet]; 2018 Jan. 15. [cited 2021 Apr 4]. Available from: https://www.highwirepress.com/insight/the-potential-impact-of-artificial-intelligence-on-the-scholarly-publishing-ecosystem/.

- 98. Impelsys. Artificial intelligence leading the change in academic publishing. Impelsys Blog [Internet]; 2019 Feb. 6. [cited 2020 Apr 4]. Available from: https://www.impelsys.com/artificial-intelligence-leading-change-academic-publishing/.

- 99. Palmer S. The 5 jobs robots will take first. LinkedIn Pulse [Internet]; 2017 Feb. 26. [cited 2021 Apr 4]. Available from: https://www.linkedin.com/pulse/5-jobs-robots-take-firstshelly-palmer/.
